Introduction

ComplianceWise faced this exact challenge with their Grub platform. Their data showed that the compliance questionnaire alone was responsible for 46% of the total time spent in the application and caused 76% of "dropped baskets" (abandoned sessions). They needed a way to maintain rigorous regulatory standards while drastically speeding up the process for accountants.

At 25Friday, we partnered with ComplianceWise to design and implement Grub AI. This intelligent agent automates the collection of facts from dossiers and documents, providing evidence-backed suggestions. This automation cuts 80% of repetitive work, ultimately reducing the customer onboarding timeline by 50%.

The Challenge: The Contextual Data Trap

The core challenge in automating AML checks isn't just processing data; it's understanding context. Junior accountants often lack the experience to answer subjective questions like "Why did this client engage our firm?"

To determine if a client is compliant, the system must synthesise information from three distinct sources:

The Structured Context: The explicit answers, legal entity structure, UBOs (Ultimate Beneficial Owners), and SBI codes confirmed in the client dossier.

Unstructured Documents: Deeds, statutes, and extracts uploaded during the process.

Institutional Knowledge (Similar Cases): Knowing how similar situations were resolved in other dossiers to ensure consistency.

A standard rules-based engine fails here. ComplianceWise needed a system capable of holistic reasoning: a "Document Wizard" that could read files and fill out the questionnaire automatically.

The Solution: A Retrieval-Augmented Generation (RAG) Engine

To solve this, 25Friday architected Grub AI, a solution based on the Retrieval-Augmented Generation (RAG) pattern. The system does not replace the accountant’s assessment; instead, it acts as an intelligent assistant that pre-processes every check, synthesising vast amounts of data into proposed answers with citations for review.

The architecture relies on intelligent cross-referencing, implemented with Semantic Search and Proximity.

What is Semantic Search & Proximity?

Unlike traditional keyword search (which looks for exact word matches), our solution converts data into mathematical vectors: lists of numbers representing the meaning of the text. By calculating the "distance" between these vectors (Proximity), the system can identify concepts that are semantically related even if they don't share the same keywords. This allows the AI to "connect the dots" between a current risk flag and a historically similar case, ensuring the advice is consistent with the firm's past decisions.
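To make the idea of proximity concrete, here is a minimal sketch that assumes cosine distance as the metric. The article does not say which distance Grub AI uses, and the three-dimensional vectors below are made-up stand-ins for real embeddings, which typically have hundreds of dimensions.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity; a smaller value means closer in meaning."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


# Hypothetical embeddings, invented purely for illustration.
risk_flag = [0.9, 0.1, 0.3]
similar_case = [0.8, 0.2, 0.4]
unrelated_case = [0.1, 0.9, 0.0]

candidates = {"similar_case": similar_case, "unrelated_case": unrelated_case}

# Pick the candidate whose vector lies closest to the current risk flag.
closest = min(candidates, key=lambda name: cosine_distance(risk_flag, candidates[name]))
print(closest)
```

Two texts that share no keywords can still end up with nearby vectors, which is what lets a current risk flag be matched to a historically similar case.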

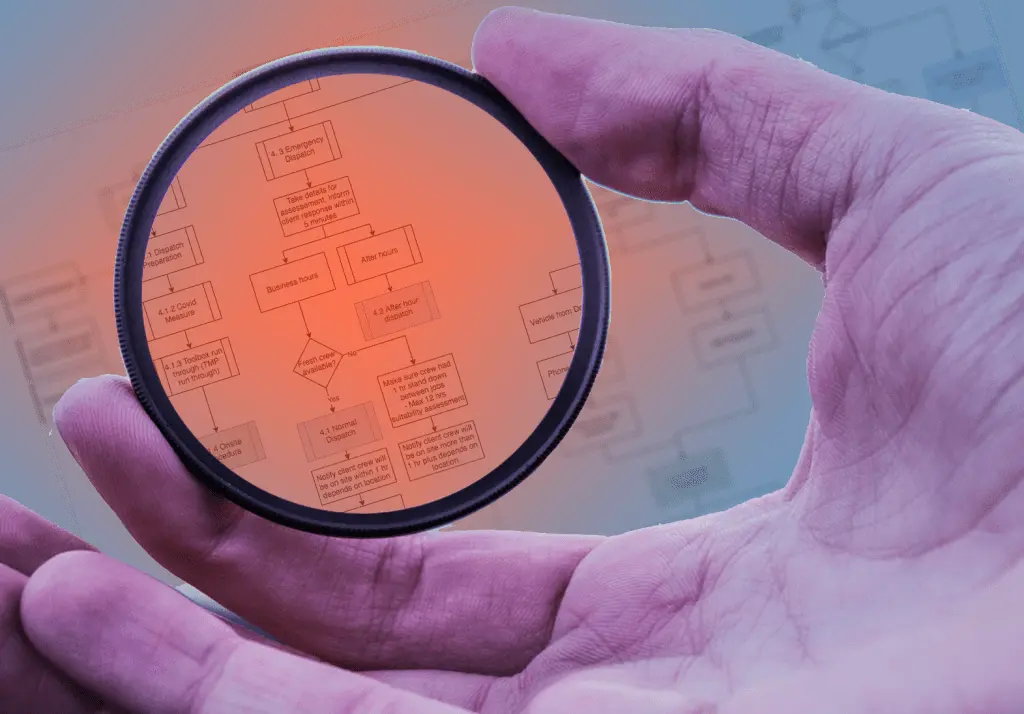

Architectural Deep Dive: The AI Workflow

The core of the solution is a Python-based orchestrator that manages the flow of information between the data sources, the knowledge base, and the Large Language Model (LLM).

Here is how the system processes a compliance profile:

1. Profile Construction & Context Vectorisation

The process begins when the user fills out the Grub Client Dossier. As they input details such as the legal entity structure, operating countries, and key stakeholders, the system packages and vectorises this context. The resulting "Company Context Object" is stored in the VectorDB as a searchable profile.
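As a rough illustration of what a "Company Context Object" might look like before it is embedded, consider the sketch below. The class name, field names, and the `to_text` helper are all assumptions made for this example; the actual Grub schema is not described here.

```python
from dataclasses import dataclass, field


@dataclass
class CompanyContext:
    # Every field name here is a hypothetical choice for illustration.
    dossier_id: str
    legal_entity_type: str
    operating_countries: list[str] = field(default_factory=list)
    ubos: list[str] = field(default_factory=list)
    sbi_codes: list[str] = field(default_factory=list)

    def to_text(self) -> str:
        """Flatten the profile into one string that an embedding model could consume."""
        return "\n".join([
            f"Legal entity: {self.legal_entity_type}",
            f"Operating countries: {', '.join(self.operating_countries)}",
            f"UBOs: {', '.join(self.ubos)}",
            f"SBI codes: {', '.join(self.sbi_codes)}",
        ])


context = CompanyContext(
    dossier_id="D-001",
    legal_entity_type="BV",
    operating_countries=["NL", "BE"],
    ubos=["Jane Doe"],
    sbi_codes=["6920"],
)
print(context.to_text())
```

The flattened text would then be passed to an embedding model and stored alongside `dossier_id`, so that later queries can find the profile by meaning rather than by exact field values.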

2. The Document Wizard (Ingestion & Chunking)

Simultaneously, the Document Wizard processes unstructured data. When an accountant uploads files, the system splits them into semantic "chunks". These chunks are embedded and indexed in the knowledge base, linking specific paragraphs of legal text to the company's profile. This feature alone cuts out 80% of the manual effort of reviewing documents.
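The chunking step can be sketched as follows. This is a simplified stand-in, assuming paragraph boundaries are a reasonable proxy for semantic units; the function name and the `max_chars` parameter are hypothetical, and the production system (which uses LangChain) may split documents quite differently.

```python
def chunk_document(text: str, max_chars: int = 500) -> list[str]:
    """Split text on blank lines, then pack paragraphs into chunks.

    Chunks stay within max_chars, except that a single paragraph longer
    than max_chars is kept whole rather than cut mid-sentence.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current = ""
    for paragraph in paragraphs:
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars:
            current = candidate  # paragraph still fits in the open chunk
        else:
            if current:
                chunks.append(current)
            current = paragraph  # start a new chunk with this paragraph
    if current:
        chunks.append(current)
    return chunks


deed = "Article 1. Name and seat.\n\nArticle 2. Objects of the company.\n\nArticle 3. Share capital."
for i, chunk in enumerate(chunk_document(deed, max_chars=60), start=1):
    print(i, repr(chunk))
```

Each chunk would then be embedded and indexed together with a reference to the dossier it belongs to, so that a retrieved paragraph can be traced back to its source document.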

3. Intelligent Retrieval (The Semantic Query)

When a specific compliance question arrives, the Python Orchestrator gathers the three key pillars of evidence:

Current Context: The specific profile data of the client (Entity, UBOs, etc.).

Document Evidence: The specific document chunks with the lowest semantic distance to the question.

Similar Case Hints: The system queries the VectorDB for previously completed dossiers that match the current company's attributes.
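The three pillars above can be gathered with a simple nearest-neighbour lookup. The sketch below uses an in-memory list in place of the VectorDB and assumes cosine distance; the function names, the index layout of `(text, vector)` pairs, and the toy vectors are all illustrative assumptions.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def top_k(query_vec, index, k=3):
    """index is a list of (text, vector) pairs; return the k closest texts."""
    ranked = sorted(index, key=lambda item: cosine_distance(query_vec, item[1]))
    return [text for text, _ in ranked[:k]]


def gather_evidence(question_vec, company_vec, context_text, chunk_index, case_index, k=2):
    """Collect the three pillars of evidence for one compliance question."""
    return {
        "current_context": context_text,
        # Chunks are ranked against the question itself.
        "document_evidence": top_k(question_vec, chunk_index, k),
        # Past dossiers are ranked against the company profile.
        "similar_cases": top_k(company_vec, case_index, k),
    }


# Toy two-dimensional vectors, invented for illustration only.
chunk_index = [
    ("Deed clause on share transfer", [1.0, 0.0]),
    ("Extract listing directors", [0.0, 1.0]),
    ("Statute on ownership", [0.9, 0.1]),
]
case_index = [
    ("Dossier A: holding BV, NL only", [0.2, 0.8]),
    ("Dossier B: trading company, multi-country", [0.9, 0.3]),
]
evidence = gather_evidence([1.0, 0.05], [0.1, 0.9], "Legal entity: BV", chunk_index, case_index)
print(evidence)
```

A real vector database would perform the ranking with an approximate nearest-neighbour index rather than a full sort, but the inputs and outputs of the step would look much the same.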

4. The Reasoning Engine (OpenAI)

Once the evidence is gathered, the Orchestrator constructs a comprehensive prompt sent to OpenAI (acting as a subprocessor). The LLM analyses the holistic context, cross-references the document data against the application claims, and generates a proposed answer. Crucially, it provides reasoning and citations, allowing the accountant to verify why the suggestion was made.
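One way the Orchestrator might assemble such a prompt is sketched below. The wording of the instructions, the `[D1]`/`[C1]` citation labels, and the function name are assumptions for illustration; the actual prompt used by Grub AI is not published, and the call to the LLM itself is deliberately left out.

```python
def build_prompt(question, context_text, chunks, similar_cases):
    """Assemble one prompt string with labelled evidence the model can cite."""
    lines = [
        "You are assisting an accountant with a compliance questionnaire.",
        "Answer using only the evidence below and cite the labels you rely on.",
        "",
        "Company context:",
        context_text,
        "",
        "Document evidence:",
    ]
    # Label each chunk so the answer can point back to its source.
    for i, chunk in enumerate(chunks, start=1):
        lines.append(f"[D{i}] {chunk}")
    lines += ["", "Similar cases:"]
    for i, case in enumerate(similar_cases, start=1):
        lines.append(f"[C{i}] {case}")
    lines += ["", f"Question: {question}", "Proposed answer with reasoning and citations:"]
    return "\n".join(lines)


prompt = build_prompt(
    question="Why did this client engage our firm?",
    context_text="Legal entity: BV",
    chunks=["Deed clause on share transfer", "Statute on ownership"],
    similar_cases=["Dossier A: holding BV, NL only"],
)
print(prompt)
```

Labelling every piece of evidence is what makes the returned citations checkable: the accountant can follow `[D2]` back to the exact paragraph that supported the suggestion.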

5. Human-in-the-Loop Delivery

The AI-generated suggestion is pushed back to the frontend. The accountant can review the pre-filled questionnaire, edit the suggestions if necessary, and complete the dossier.
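The review step can be modelled as a small state change on each suggestion. The sketch below is hypothetical: the class, its fields, and the status values are assumptions chosen to show that the accountant's decision, not the AI output, determines the final answer.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Suggestion:
    # Hypothetical structure for one pre-filled questionnaire answer.
    question_id: str
    proposed_answer: str
    citations: list[str] = field(default_factory=list)
    final_answer: Optional[str] = None
    status: str = "pending"  # becomes "accepted" or "edited" after review

    def accept(self) -> None:
        """The accountant confirms the AI proposal unchanged."""
        self.final_answer = self.proposed_answer
        self.status = "accepted"

    def edit(self, new_answer: str) -> None:
        """The accountant overrides the proposal with their own answer."""
        self.final_answer = new_answer
        self.status = "edited"


suggestion = Suggestion("Q7", "Client sought audit support.", citations=["D1"])
suggestion.edit("Client sought audit and tax advisory support.")
print(suggestion.status, "-", suggestion.final_answer)
```

Keeping the original proposal next to the final answer also leaves a record of where the accountant agreed with the AI and where they did not.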

The Tech Stack

ComplianceWise used a modern, decoupled architecture to ensure scalability and separation of concerns:

Visualisation & Context: Angular + Java handling the user interface and building the initial company context structures.

Knowledge Base: Python + LangChain + VectorDB to handle document chunking, embeddings, and semantic storage.

Inference Engine: Python + LangChain + OpenAI to handle the reasoning logic and answer generation.

The Results

By implementing this custom AI architecture, ComplianceWise transformed a manual bottleneck into a competitive differentiator.

The system provides consistent answers derived from intelligent cross-referencing, directly addressing the 46% of application time that accountants previously spent on the questionnaire. The resulting 50% reduction in onboarding timelines and 80% reduction in repetitive document work have allowed firms to handle more clients without increasing staff.